Some brief notes from day 3 of NeurIPS 2018. Previous notes here: Expo Tutorials Day 2.

Reproducible, Reusable, and Robust Reinforcement Learning (Professor Joelle Pineau)

I was sad to miss this talk, but lots of people told me it was great so I’ve bookmarked it to watch later.

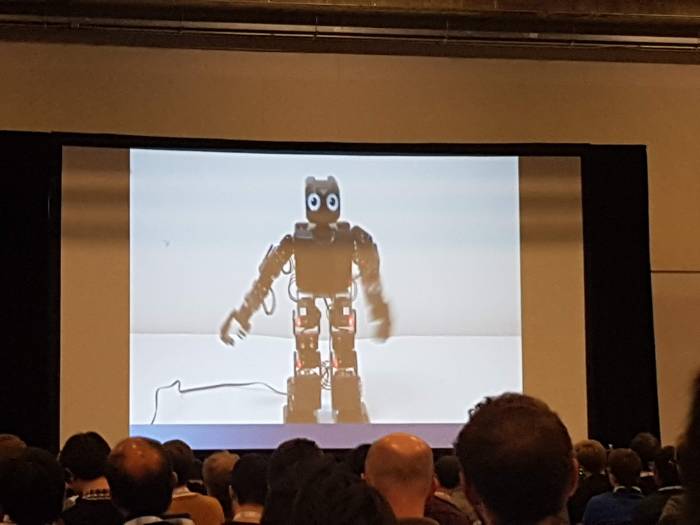

Investigations into the Human-AI Trust Phenomenon (Professor Ayanna Howard)

A few interesting points in this talk:

- Children are unpredictable, and working with data generated from experiments with children can be hard (for example, children will often try to win at games in unexpected ways).

- Automated vision isn’t perfect, but in many cases it’s better than the existing baseline (having a human record certain measurements) and can be very useful.

- Having robots show sadness or disappointment turns out to be much more effective than anger for changing a child’s behaviour.

- Humans seem to inherently trust robots!

Two cool experiments:

To what extent would people trust a robot in a high-stakes emergency situation?

A research subject is led into a room by a robot, for a yet-to-be-defined experiment. The room fills with smoke, and the subject goes to leave the building. On the way out, the same robot is indicating a direction. Will the subject follow the robot?

It turns out that, yes, almost all of the time, the subject follows the robot.

What about if the robot, when initially leading the subject to the room, makes a mistake and has to be corrected by another human?

Again, surprisingly, the subject still follows the robot in the emergency situation that follows.

The point at which this stopped being true was when they had the robot point to obviously wrong directions (e.g. asking the subject to climb over some furniture).

This research has some interesting conclusions, but I’m not completely convinced. For one, based on the videos of the ‘emergency situation’, it seems unlikely that any of the subjects would have believed the emergency situation to be genuine. The smoke is extremely localised, and the ‘exit’ just leads into another room. It seems far more likely to me that the subjects were trying to infer the researchers’ intentions for the study and decided to follow the robot since that was probably what they were meant to do.

Unfortunately, the unconvincing setup turns out to be because their ethics committee wouldn’t give them approval to run a more realistic version of the study in a separate building, which is a real shame. More on this study in the paper, or in this longer write-up.

Do racism and sexism apply to people’s perception of robots?

Say we program a robot to perform a certain sequence of behaviours, and then ask someone to interpret the robot’s intention behind that behaviour. Will their interpretation be affected by a robot’s ‘race’ or ‘gender’?

It turns out that, yes, it is. For example, when primed to be aware of the ‘race’ of a robot, subjects are more likely to interpret the behaviour as angry when the robot is black than when the robot is white.

But when humans are not primed to pay attention to race (and are just shown robots in different colours), the effect disappears. Paper here.

Full video of the talk below.